The Convergence of Operational and Analytical Platforms

For decades, enterprise data architectures separated operational systems from analytical platforms. Operational databases handled transactional workloads and application state, while analytical systems processed replicated copies of that data for reporting, business intelligence, and machine learning.

As organizations expanded their data environments, this separation introduced increasingly complex architectures involving:

- Operational databases

- CDC pipelines

- Event streaming platforms

- Data warehouses

- Analytics and machine learning platforms

The Lakehouse architecture began simplifying this model by unifying data engineering, analytics, and machine learning on a single platform.

Now a new wave of platform capabilities—including innovations such as Databricks Lakebase—suggests the next evolution may involve supporting operational and analytical workloads on a unified data platform. This shift represents a potential step toward architectures where applications, analytics, and AI systems interact with the same governed data foundation.

Although still very new, Lakebase signals a possible next step in the evolution of the Lakehouse, one that could significantly reshape how data architectures are designed.

The Traditional Data Architecture Problem

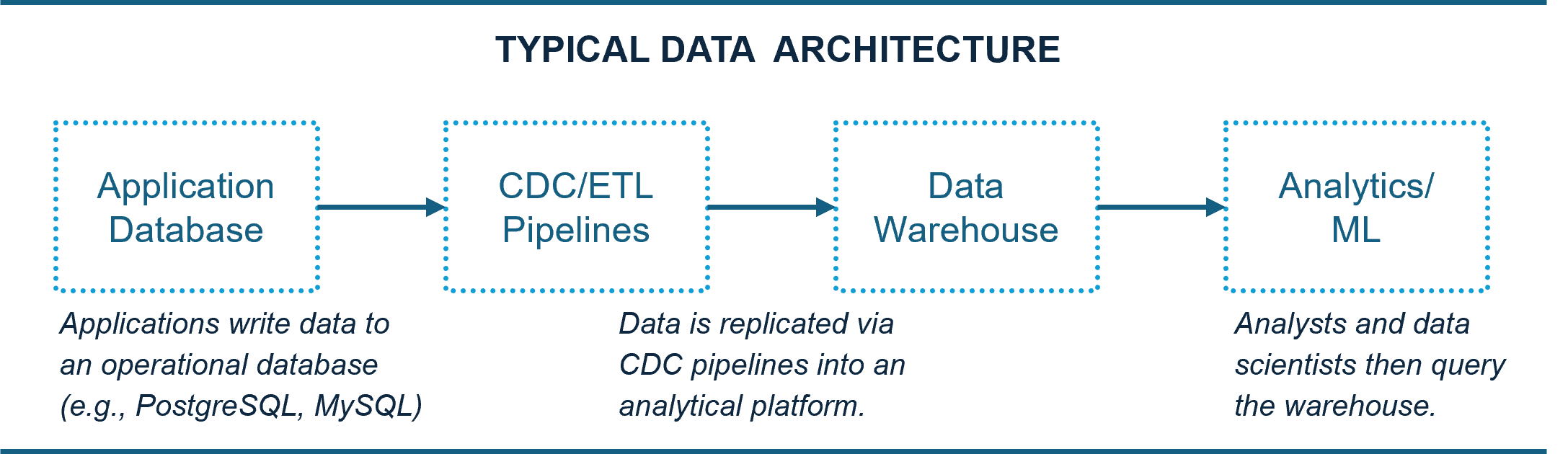

Most organizations today operate with a split architecture between operational systems and analytical platforms, similar to the following:

This architecture works, but it introduces several challenges:

- Complex replication pipelines

- Latency between operational and analytical data

- Duplicate data storage

- Multiple governance layers

- Increased infrastructure cost

As data volumes and real-time use cases grow, this separation becomes increasingly difficult to manage.
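The latency and duplication problems listed above can be made concrete with a toy sketch. It assumes two in-memory SQLite databases as stand-ins for an operational system and its analytical copy; the `orders` table and the `place_order`, `replicate`, and `revenue` helpers are hypothetical names chosen for illustration and do not correspond to any real pipeline product.

```python
import sqlite3

# Two separate in-memory databases stand in for the operational system and
# the analytical copy (hypothetical stand-ins, not real products).
operational = sqlite3.connect(":memory:")
analytical = sqlite3.connect(":memory:")
for db in (operational, analytical):
    db.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")


def place_order(order_id: int, amount: float) -> None:
    """Transactional write: lands only in the operational store."""
    with operational:
        operational.execute(
            "INSERT INTO orders VALUES (?, ?)", (order_id, amount)
        )


def replicate() -> int:
    """Batch sync standing in for a CDC/ETL pipeline; returns rows copied."""
    (high_water,) = analytical.execute(
        "SELECT COALESCE(MAX(id), 0) FROM orders"
    ).fetchone()
    rows = operational.execute(
        "SELECT id, amount FROM orders WHERE id > ?", (high_water,)
    ).fetchall()
    with analytical:
        analytical.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    return len(rows)


def revenue(db: sqlite3.Connection) -> float:
    """Analytical-style aggregate over whichever store is passed in."""
    (total,) = db.execute(
        "SELECT COALESCE(SUM(amount), 0) FROM orders"
    ).fetchone()
    return total


place_order(1, 100.0)
replicate()
place_order(2, 50.0)  # written after the last sync, so not yet replicated

stale_total = revenue(analytical)   # the analytical copy lags behind
fresh_total = revenue(operational)  # the operational store has every order
replicate()
synced_total = revenue(analytical)  # the copy catches up only after a sync
```

Until `replicate()` runs again, the analytical copy reports a smaller total than the operational store, and every order is stored twice. Real pipelines are far more sophisticated, but the gap between a write and its appearance in analytics has the same origin.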

The Industry Shift Toward HTAP: The Next Step in Lakehouse Evolution

Hybrid transactional and analytical processing (HTAP) has long been an architectural goal: enabling operational workloads and analytical queries to operate on the same underlying data platform.

Historically, achieving HTAP required specialized databases or complex architectures that introduced operational trade-offs.

Emerging capabilities such as Lakebase suggest that modern Lakehouse platforms may begin supporting these workloads together, enabling applications, analytics, and machine learning systems to operate on the same data platform.

If this architectural direction continues, organizations may increasingly view the Lakehouse as a central platform for both operational data and advanced analytics.
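As a rough illustration of the HTAP idea, the sketch below uses a single in-memory SQLite database as a stand-in for a unified platform: the same store accepts transactional writes and answers an aggregate query, with no replication step in between. This is only an analogy under stated assumptions. It is not the Lakebase API, and the `orders` table and both helper functions are hypothetical.

```python
import sqlite3

# One in-memory database stands in for a unified platform (illustrative only;
# this is not the Lakebase API).
platform = sqlite3.connect(":memory:")
platform.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount REAL)"
)


def place_order(order_id: int, region: str, amount: float) -> None:
    """Operational-style write, committed as a single transaction."""
    with platform:
        platform.execute(
            "INSERT INTO orders VALUES (?, ?, ?)", (order_id, region, amount)
        )


def revenue_by_region() -> dict:
    """Analytical-style aggregate, run against the same store."""
    rows = platform.execute(
        "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region"
    ).fetchall()
    return dict(rows)


place_order(1, "east", 100.0)
place_order(2, "west", 40.0)
before = revenue_by_region()

place_order(3, "east", 25.0)
after = revenue_by_region()  # the new order is visible without any sync step
```

The aggregate reflects the third order as soon as it is committed. A production platform would also have to keep heavy analytical scans from slowing transactional writes, which this toy example ignores.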

How Lakebase Introduces a Potential Architectural Shift

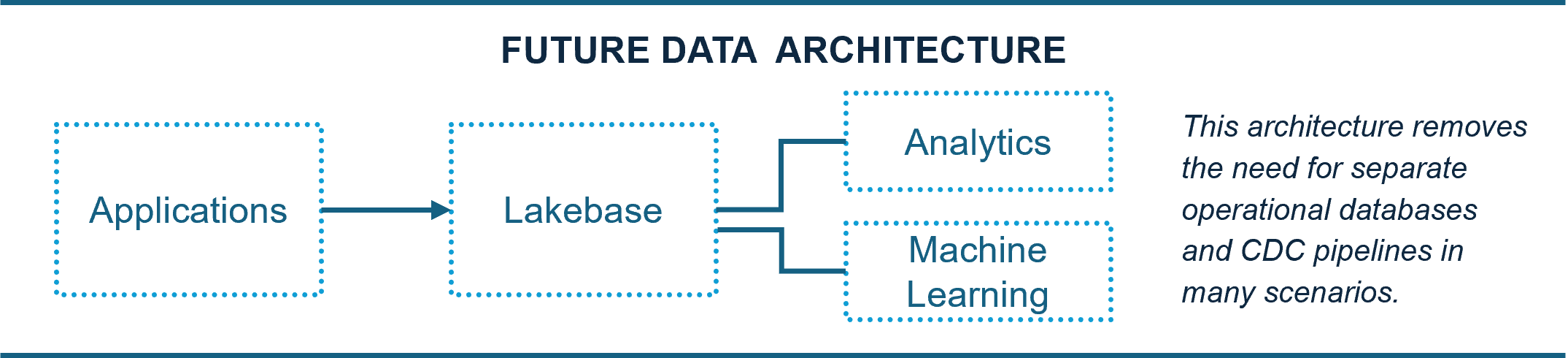

Lakebase aims to enable operational-style workloads directly on the Lakehouse. Rather than relying on a separate operational database, applications could write data directly to the Databricks platform while analytics and machine learning workloads access the same data. Future architecture could look like this:

This is strategically important because Databricks already has strong capabilities across several parts of the data stack:

- Data Engineering. Databricks remains a leader in large-scale data engineering through Apache Spark, Delta Lake, Structured Streaming, and Auto Loader. These capabilities make Databricks particularly strong for complex pipeline development.

- Machine Learning. Databricks has a mature ML ecosystem, including MLflow, Feature Store, and model training infrastructure.

- Real-Time Data Processing. Databricks integrates deeply with streaming technologies and real-time ingestion mechanisms, enabling architectures that support both batch and streaming workloads on the same platform.

If Lakebase matures and delivers low-latency operational capabilities, the typical modern architecture could shift dramatically to an approach that eliminates several infrastructure layers while keeping all data within a single governed platform.

Why This Matters for Data Teams

The biggest benefit of a unified architecture is simplicity. By eliminating separate operational and analytical systems, organizations can reduce data duplication, synchronization pipelines, operational overhead, and system latency.

At the same time, analytics and machine learning workloads gain access to data immediately after it is produced. For teams building real-time analytics, AI-driven applications, or large-scale data platforms, this convergence could be transformative.

Databricks has released several important innovations in recent years:

- Photon significantly improved SQL query performance.

- Unity Catalog strengthened governance.

- Delta Lake provided ACID reliability for the Lakehouse.

Lakebase stands apart because it has the potential to change something deeper: the architecture itself. This could move Databricks from being a data platform to serving as the primary system of record for modern data-driven applications.

Building Enterprise Lakehouse Platforms

Implementing unified data platforms requires more than infrastructure capabilities. Organizations adopting Lakehouse architectures must also establish:

- Enterprise data governance frameworks

- Scalable data engineering pipelines

- Secure data-sharing architectures

- Platforms capable of supporting advanced analytics and AI workloads

Anika Systems guides organizations in designing enterprise Lakehouse platforms that integrate Databricks capabilities with enterprise data strategy, intelligent data pipelines, and secure governance frameworks.

This approach enables agencies and enterprises to scale analytics, machine learning, and AI applications on trusted and governed data platforms.

Final Thoughts

The long-term vision of the Lakehouse has always been to unify analytics, machine learning, and data engineering on a single platform.

Lakebase suggests Databricks may be taking that vision one step further—toward a world where operational data and analytical workloads run on the same foundation.

The next phase of data architecture is coming into focus. The traditional arrangement of separate operational databases, ETL pipelines, and warehouses may gradually give way to something much simpler:

One platform. One data architecture.

How will your organization adapt to these shifts in the data ecosystem? Organizations that successfully adopt these architectures could build a new generation of intelligent, data-driven applications on modern Lakehouse platforms.

About Anika Systems

Anika Systems delivers advanced technology solutions that drive efficiency and digital transformation for federal agencies. With deep expertise in AI Strategy and Digital Transformation, Intelligent Automation, AI and Machine Learning, Data Intelligence and Governance, and Enterprise Platforms and Engineering, we empower government agencies to accelerate mission outcomes with precision and agility.

We are a "show me" versus "tell me" company—delivering real, measurable impact through data-driven insights and scalable automation. At Anika Systems, we don't just keep up with change—we drive it.

To explore Anika Systems’ solutions, visit www.anikasystems.com/capabilities